In this blog, I avoid writing posts that are heavily product focused, since my intention is generally to provide education and interesting information about power products instead of simply promoting our products. However, when we (Agilent) come out with new power products, I think it is appropriate for me to announce them here. So I will tell you about the latest products announced last week, but I also can’t resist writing about a technical aspect related to these products, so I chose to write about autorangers. But first... a word from our sponsor...

From last week’s press release, Agilent Technologies “introduced seven high-power modules for its popular N6700 modular power system. The new modules expand the ability of test-system integrators and R&D engineers to deliver multiple channels of high power (up to 500 watts) to devices under test.” Here is a link to the entire press release:

http://www.agilent.com/about/newsroom/presrel/2012/17jan-em12002.html

I honestly think these new power modules are really great additions to the family of N6700 power products we continue to build upon. We have several mainframes in which these power modules can be installed and now offer 34 different power modules that address applications in R&D and in integrated test systems. Oooooppps, I slipped into product promotion mode there for just a short time, but it was because I really believe in this family of products... I hope you will forgive me!

OK, now on to the more fun stuff! Since six of these seven new power modules are autorangers, let’s explore what an autoranger is. Agilent has been designing and selling autorangers since the 1970s (we were Hewlett-Packard back then) starting with the HP 6002A. To understand what an autoranger is, it will be useful to start with an understanding of what a power supply output characteristic is.

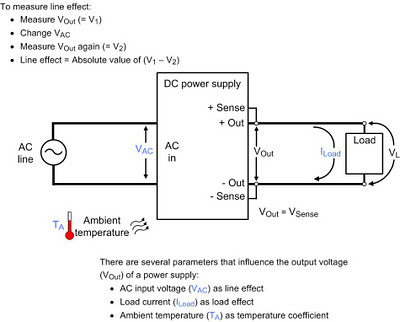

Power supply output characteristic

A power supply output characteristic shows the borders of an area containing all valid voltage and current combinations for that particular output. Any voltage-current combination that is inside the output characteristic is a valid operating point for that power supply.

There are three main types of power supply output characteristics: rectangular, multiple-range, and autoranging. The rectangular output characteristic is the most common.

Rectangular output characteristic

When shown on a voltage-current graph, it should be no surprise that a rectangular output characteristic is shaped like a rectangle. See Figure 1. Maximum power is produced at a single point coincident with the maximum voltage and maximum current values. For example, a 20 V, 5 A, 100 W power supply has a rectangular output characteristic. The voltage can be set to any value from 0 to 20 V, and the current can be set to any value from 0 to 5 A. Since 20 V x 5 A = 100 W, there is a single maximum power point that occurs at the maximum voltage and current settings.

Multiple-range output characteristic

When shown on a voltage-current graph, a multiple-range output characteristic looks like several overlapping rectangular output characteristics. Consequently, its maximum power point occurs at multiple voltage-current combinations. Figure 2 shows an example of a multiple-range output characteristic with two ranges, also known as a dual-range output characteristic. A power supply with this type of output characteristic has extended output range capabilities when compared to a power supply with a rectangular output characteristic; it can cover more voltage-current combinations without the additional expense, size, and weight of a power supply of higher power. So, even though you can set voltages up to Vmax and currents up to Imax, the combination of Vmax and Imax together is not a valid operating point. That point is beyond the power capability of the power supply and it is outside the output characteristic.
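To make the "overlapping rectangles" idea concrete, here is a minimal Python sketch. The two ranges (20 V at 5 A, and 10 V at 10 A, both 100 W) are hypothetical values chosen only for illustration; they are not taken from Figure 2 or from any particular product.

```python
# Hypothetical dual-range supply, for illustration only.
# Each range is one rectangle: (max volts, max amps). Both top out at 100 W.
RANGES = [(20.0, 5.0), (10.0, 10.0)]


def is_valid_point(volts, amps, ranges=RANGES):
    """Return True if (volts, amps) lies inside at least one range rectangle."""
    return any(
        0 <= volts <= v_max and 0 <= amps <= i_max
        for v_max, i_max in ranges
    )


print(is_valid_point(20.0, 5.0))   # True: corner of the high-voltage range
print(is_valid_point(10.0, 10.0))  # True: corner of the high-current range
print(is_valid_point(20.0, 10.0))  # False: Vmax and Imax together
```

With these assumed numbers, Vmax is 20 V and Imax is 10 A, but the point (20 V, 10 A) would be 200 W and falls outside both rectangles.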

Autoranging output characteristic

When shown on a voltage-current graph, an autoranging output characteristic looks like an infinite number of overlapping rectangular output characteristics. A constant power curve (V = P / I = K / I, a hyperbola) connects Pmax occurring at (I1, Vmax) with Pmax occurring at (Imax, V1). See Figure 3.

An autoranger is a power supply that has an autoranging output characteristic. While an autoranger can produce voltage Vmax and current Imax, it cannot produce them at the same time. For example, one of the new power supplies just released by Agilent is the N6755A with maximum ratings of 20 V, 50 A, 500 W. You can tell it does not have a rectangular output characteristic since Vmax x Imax (= 1000 W) is not equal to Pmax (500 W). So you can’t get 20 V and 50 A out at the same time. You can’t tell just from the ratings if the output characteristic is multiple-range or autoranging, but a quick look at the documentation reveals that the N6755A is an autoranger. Figure 4 shows its output characteristic.
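As a rough sketch of what the three ratings imply, the Python below models an idealized autoranging boundary using only the N6755A numbers quoted above (20 V, 50 A, 500 W). The function names are my own, and the model assumes a perfect constant-power hyperbola between the two corner points; a real instrument's documented characteristic is the authority.

```python
# Ratings quoted in the text for the N6755A.
V_MAX = 20.0   # volts
I_MAX = 50.0   # amps
P_MAX = 500.0  # watts


def in_autoranging_area(volts, amps):
    """Idealized check: inside the V and I limits and under the power curve."""
    return 0 <= volts <= V_MAX and 0 <= amps <= I_MAX and volts * amps <= P_MAX


def max_current_at(volts):
    """Largest current available at a voltage setting (idealized model)."""
    if not 0 <= volts <= V_MAX:
        raise ValueError("voltage setting is outside the supply's range")
    if volts == 0:
        return I_MAX
    return min(I_MAX, P_MAX / volts)


# End points of the constant-power curve, (I1, Vmax) and (Imax, V1).
i1 = P_MAX / V_MAX  # 25.0 A available at 20 V
v1 = P_MAX / I_MAX  # 10.0 V available at 50 A

print(i1, v1)                           # 25.0 10.0
print(in_autoranging_area(20.0, 50.0))  # False: would need 1000 W
print(max_current_at(15.0))             # a point on the hyperbola
```

Under this model, full current is available at any voltage up to 10 V, full voltage is available at any current up to 25 A, and everything in between is limited by the 500 W curve.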

Autoranger application advantages

For applications that require a large range of output voltages and currents without a corresponding increase in power, an autoranger is a great choice. Here are some example applications where using an autoranger provides an advantage:

• The device under test (DUT) requires a wide range of input voltages and currents, all at roughly the same power level. For example, at maximum power out, a DC/DC converter with a nominal input voltage of 24 V consumes a relatively constant power even though its input voltage can vary from 14 V to 40 V. During testing, this wide range of input voltages creates a correspondingly wide range of input currents even though the power is not changing much.

• There are a variety of different DUTs of similar power consumption, but different voltage and current requirements. Again, different DC/DC converters in the same power family can have nominal input voltages of 12 V, 24 V, or 48 V, resulting in input voltages as low as 9 V (requires a large current), and as high as 72 V (requires a small current). The large voltage and current are both needed, but not at the same time.

• A known change is coming for the DC input requirements without a corresponding change in input power. For example, the input voltage on automotive accessories could be changing from 12 V nominal to 42 V nominal, but the input power requirements will not necessarily change.

• Extra margin on input voltage and current is needed, especially if future test changes are anticipated, but the details are not presently known.
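The DC/DC converter example in the first bullet can be put into numbers with a short Python sketch. The 14 V to 40 V input range comes from the text; the 100 W input power is a hypothetical figure I picked for illustration, and the calculation simply treats input power as constant over the whole voltage range.

```python
def input_current(power_w, volts):
    """Current drawn by a constant-power load at a given input voltage."""
    return power_w / volts


def supply_requirements(power_w, v_min, v_max):
    """Compare what a rectangular supply and an autoranger must be rated for."""
    i_max = input_current(power_w, v_min)  # worst-case current is at lowest voltage
    return {
        "v_max": v_max,
        "i_max": i_max,
        # A rectangular supply must deliver Vmax and Imax at the same point.
        "rectangular_power": v_max * i_max,
        # An autoranger only needs to cover the constant power level itself.
        "autoranger_power": power_w,
    }


# Hypothetical 100 W converter tested over the 14 V to 40 V range from the text.
req = supply_requirements(100.0, 14.0, 40.0)
for name, value in req.items():
    print(f"{name}: {value:.1f}")
```

With these assumptions, the converter draws about 7.1 A at 14 V but only 2.5 A at 40 V. A rectangular supply covering both extremes would need to be rated for roughly 286 W, while an autoranger with a 100 W power curve spanning the same voltage and current limits would cover every test point.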